The COVID-19 pandemic has made health and social care services think differently. We are no exception.

The pandemic has made clear that some of the ways we currently work prevent us from being flexible and responding to situations as they happen. Following on from the consultation on our new strategy and ambitions launched earlier in January, we’re now proposing some specific changes that will enable us to deal with ongoing challenges from the pandemic and move us towards our ambition to be a more dynamic, proportionate and flexible regulator.

Our inspection reports and ratings give a view of quality that’s vital for the public, service providers and stakeholders. We want to introduce changes to allow us to assess and rate services more flexibly, so we can update our ratings more often in a more accessible, responsive and proportionate way. The changes will make ratings easier to understand for everyone.

The proposals we’re consulting on in part 1 apply to all health and care sectors that we regulate (including those that we don’t have the powers to rate). In part 2, we set out how we’ll be engaging with you in the future when we make changes to the way we regulate.

Part 1: Our proposals for change

Assessing and rating quality

What we do now

Our site visits are an important part of how we assess the quality of services. They enable us to observe care and the culture in a service, particularly in services at risk of developing a closed culture. By a closed culture we mean a poor culture that can lead to harm, including breaches of human rights such as abuse. Site visits can also help us to check whether the information we have reflects people’s experience of care. Under our current ways of working, we must always carry out a site visit in order to assess quality and rate a service.

However, site visits are not the only way to assess quality and update ratings. We already use information from a wide range of sources to support our judgements (for example, national data sets, information from other organisations and partners, and feedback from the public). Good quality information is already available for specific types of service, such as NHS services, where it helps us to judge key questions such as the effectiveness of services. All of this information can be gathered without a site visit.

There’s also more use of digital technology in health and social care, enabling services to deliver care remotely, for example online primary care services. We’ve seen more services taking advantage of this during the pandemic. We now have an opportunity to think differently about how we assess quality in these types of services, because a site visit cannot capture the quality of care that is delivered remotely.

The pandemic has highlighted the need for our assessment activity to be more targeted and focused, and to depend less on ‘physical’ site inspections and pre-inspection information requests (for example, Provider Information Requests (PIRs) in hospitals). In the last 11 months we’ve been exploring and testing new ways of working, including enhanced monitoring (such as our Emergency Support Framework and Transitional Regulatory Approach), targeted inspections and gathering evidence without physically crossing the threshold. For example, we’re currently testing ways of assessing home care providers and GP practices without visiting the premises.

When we’ve needed to visit a service during the pandemic, inspections have been targeted and focused on areas of highest concern wherever possible, which minimises the time our teams spend on site.

For services that we rate, how often we inspect is determined primarily by the current rating for the service or provider. These rules vary across the sectors that we regulate. They mean that our regulation can’t be as flexible and responsive as it needs to be. The frequency rules also rely on on-site inspections as the primary way for us to carry out our work.

What we want to do differently

Assessing quality

We want to move away from using comprehensive, on-site inspection as the main way of updating ratings (or assessing quality in services where we don’t rate). Instead, we want to use a wider range of evidence, tools, and techniques to assess quality. This includes updating ratings where we’ve gathered appropriate evidence through focused or targeted inspections or through assessments without a site visit, and where we need to take significant enforcement action to protect people. But inspection will remain an important part of how we assess quality: we’ll still carry out an on-site inspection where we have information about significant risks to people’s safety, and to ensure we protect the rights of vulnerable people.

Our proposed change means we’ll make more use of information that we hold to update ratings, and it won’t be necessary to always carry out a site visit if we want to update a rating. If we need to ask health and care providers for information before an inspection, the requests will be targeted and proportionate. We’ll carry on using more targeted inspections to enable us to work in this more focused and proportionate way.

We’ll carry on developing the approach to assessing home care providers and GP practices without visiting the premises, moving towards updating ratings on the basis of this activity. We’ll use learning from these pilots to consider how we can apply this approach to other health and care settings.

What do you think?

1. Assessing quality

We propose to assess quality and rate services by using a wider range of regulatory approaches – not just on-site or comprehensive inspections.

Question 1a. To what extent do you support this approach?

Question 1b. What impact do you think this proposal will have?

Reviewing and updating ratings

We want a less rigid approach that allows us to update ratings more often when we recognise changes in quality and to make our on-site inspections more targeted and flexible. This will enable us to provide a more up-to-date overview of the quality of care across England. The changes that we want to make mean we won’t return to using the current inspection frequencies that are published on our website, or to the large-scale inspections associated with them.

We want to stop describing the frequency of assessment in terms of ‘inspection’, and instead describe it in terms of how often we review quality and update ratings. So, we’ll focus on reviewing, confirming and changing ratings in a variety of ways, not only after a physical on-site inspection or a full assessment of quality. Being able to update ratings without an on-site inspection means we’ll be able to use the expertise and professional judgement of our inspectors in a more flexible way.

We’ll use the best available information about quality in a service to review ratings more often. This will include a better understanding of people’s feedback and experiences of care, combined with targeted inspections, national and local data, insight from other organisations and partners, and information from our relationships with care services, including their own self-assurance and accreditation. All this will enable us to reflect changes in quality more quickly than we did before, which we know is important to the public, service providers and stakeholders.

We currently do not have the powers to rate primary care dental services, so inspection frequencies for these providers can’t be linked to a previous rating. We’ll continue to assess practice locations, selecting them for inspection on the basis of risk and sampling of services.

For all these changes to happen we’ll need to further develop our assessment frameworks and publish information to explain how often we’ll update ratings in a consistent and proportionate way. This work will sit alongside wider changes in how we assess quality to help us deliver our new strategy later in the year. We’ll work with partners across health and social care on these developments and will keep you up to date with changes as soon as we can, explaining them clearly.

What do you think?

2. Reviewing and updating ratings

Rather than following a fixed schedule of inspections, we propose to move to the more flexible, risk-based approach set out in this section for how often we assess and rate services.

Question 2a. To what extent do you support this approach?

Question 2b. What impact do you think this proposal will have?

Changing how we rate GP practices and NHS trusts

In our new strategy, we have an ambition to evolve our ratings to make them easier to understand, more relevant and accessible for health and care providers and people who use services. We want to get going on this now, starting with changes to simplify how we rate GP practices and NHS trusts, and how we aggregate ratings.

At the moment, we are not proposing any changes to how we aggregate ratings in adult social care as part of this consultation.

GP practices

What we do now

As well as inspecting and rating GP practices for the five key questions, we currently also assess people’s experiences of care in six population groups (older people; people with long-term conditions; families, children and young people; working age people; people whose circumstances make them vulnerable; and people experiencing poor mental health). We give a rating for each population group against the effective and responsive key questions. The two ratings are then aggregated to reach an overall rating for these population groups.

We introduced this in 2014 when we began rating GP practices, and made changes in 2018 to improve our approach to rating the population groups. The intention of rating practices in this way was to reflect the various needs of different people when they access primary medical care and to reflect any variation in the quality of care people receive from GP practices.

What we want to do differently

After evaluating this approach, and listening to feedback from GP practices, national stakeholders and our own inspectors, we propose to stop providing ratings for individual population groups because:

- There is little variation in ratings for the different population groups, as they are usually influenced by evidence and judgements about the quality of care that affects all the people using a GP practice.

- Our current approach to rating GP practices is too complex and we are committed to considering our regulatory impact and to keeping our approach as simple as possible.

- Providing care to specific population groups is often influenced by wider local health systems; we want to reflect this in developing our approach to primary care networks and the wider health and care system.

In 2021, we plan to introduce simplified ratings for GP practices at two levels:

Level 1: A rating for each key question for the location/service. This will be based on relevant evidence of how GP practices personalise people’s care and provide care for different groups of people.

Level 2: An overall rating for the service. This will be an aggregated rating informed by our findings at level 1.

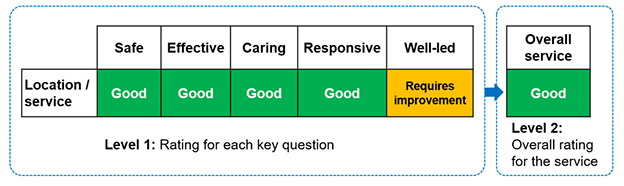

Figure 1 shows an example of how the simplified ratings, without aggregated ratings for population groups, could look for a GP practice.

Figure 1: Example of proposed rating of a GP practice

The table shows:

At level 1, the rating against each key question for the location or service.

Safe, effective, caring and responsive are all rated good. Well-led is rated requires improvement.

At level 2, this gives an overall rating of good.

This change doesn’t mean that we’ll stop looking at how practices provide personalised and proactive care to their local populations and consider people’s different needs when receiving primary medical care. This will still be a key part of our assessment activity. It means that we won’t provide an additional and separate rating for different groups of people for the effective and responsive key questions.

What do you think?

3. Rating GP practices and population groups

We propose to stop providing separate and distinct ratings for the six population groups when rating GP practices.

Question 3a. To what extent do you support this approach?

Question 3b. What impact do you think this proposal will have?

NHS trusts

What we do now

As well as inspecting and rating core services in NHS trusts, we also inspect and rate the well-led key question at trust level (and the trust’s use of resources, where applicable). We use our aggregation principles and the professional judgement of our inspection teams to rate the other four key questions at trust level. We then aggregate these to produce a rating of the overall quality of the trust.

We introduced our approach to rating NHS trusts in 2013 and introduced the assessment of the well-led key question at trust level in 2017. This was to reflect the proven link between a trust’s leadership and culture and the delivery of safe, high-quality care. Together with NHS Improvement, we agreed an updated framework for assessing the well-led key question.

In evaluating this approach, we have listened to feedback from NHS trusts, the public, national stakeholders and our own inspectors, and we now propose to change how we rate NHS trusts. We want to simplify our approach to rating at the trust level because:

- The approach is too complex, and the aggregation can conceal variation in the quality of services.

- Aggregated ratings rely on inspection at a service level and can become out of date quickly.

- Aggregated ratings do not always reflect the way people experience services and care.

What we want to do differently

We propose to simplify ratings for NHS trusts by publishing a single rating at the overall trust level, rather than multiple levels of complex, aggregated ratings. This will enable us to focus on the culture and leadership of an organisation, as well as the services where people receive care.

This single rating will be based on our overall assessment of the organisation’s performance against the well-led key question, including findings from service-level assessments. We’ll continue to develop our approach to assessing the well-led key question at trust level to make sure that we look at the overall organisational performance on quality and safety effectively as part of that assessment.

Once we implement this approach, we will no longer publish separate trust-level ratings for the safe, effective, caring and responsive key questions. We will continue to publish those ratings at service and location level. This will provide a clear view of the quality of those services at the level that is relevant to people who use the service.

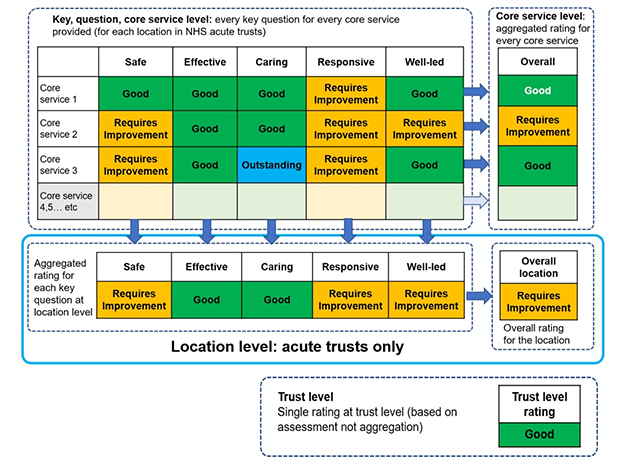

Figure 2 shows an example of how the simplified ratings could look with a single rating at the overall trust level for an NHS trust.

Figure 2: Example of proposed ratings for NHS trusts

The table shows a rating against each key question for each core service the trust provides.

For core service 1, safe, effective, caring and well-led are all rated good. Responsive is rated requires improvement. This gives an overall rating of good for this core service.

For core service 2, safe, responsive and well-led are all rated requires improvement. Effective and caring are rated good. This gives an overall rating of requires improvement for this core service.

For core service 3, safe and responsive are rated requires improvement. Effective and well-led are rated good. Caring is rated outstanding. This gives an overall rating of good for this core service.

All other core services would be rated in the same way, with a rating for each key question and an overall rating for the core service.

This also gives an aggregated rating for each key question at location level. Using the example ratings above, this means:

- Safe is rated requires improvement.

- Effective is rated good.

- Caring is rated good.

- Responsive is rated requires improvement.

- Well-led is rated requires improvement.

The overall rating for the location is requires improvement.

The trust-level rating is a single rating based on assessment, not aggregation. In this case, it’s good.

Over 2021, we’ll be working with partners at NHS England and Improvement to explore how to evolve the future approach to overall quality ratings for NHS trusts. We’ll work with service providers and partners across the sector on this important area and will keep you up to date with developments as soon as we can, explaining them clearly.

What do you think?

4. Rating NHS trusts

We propose to remove aggregation for NHS trust-level ratings and replace it with a single trust-level rating, based on a development of our current assessment of the well-led key question for a trust.

Question 4a. To what extent do you support this approach?

Question 4b. What impact do you think this proposal will have?

Measuring the impact on equality

We need to consider equality and human rights in all our work, so we’ve produced a draft equality and human rights impact assessment. It identifies the opportunities our proposals create to improve equality and human rights, and the risks they pose. Importantly, it identifies the actions we’ll take to minimise the risks and make positive change happen.

What do you think?

5. Measuring the impact on equality

Question 5. We’d like to hear what you think about the opportunities and risks for equality and human rights identified in our draft equality impact assessment. For example, you can tell us your thoughts on:

- Whether the proposals will have an impact on some groups of people more than others, such as people with a protected equality characteristic.

- Whether any impact would be positive or negative.

- How we could reduce or remove any negative impacts.

Part 2: How we’ll engage with you in the future

The way we currently consult and engage on any changes to our methods is a long process and means we can’t implement changes and tell you about them quickly enough.

What we do now

Under section 46 of the Health and Social Care Act 2008, we must prepare, publish, and consult on a statement that explains how we’ll assess the performance of health and care service providers. Historically, our approach has involved publishing and consulting on detailed information describing our entire processes for each type of service, with large public consultations usually taking place over a 12-week period.

This approach goes beyond the requirements of the Act and has limited our ability to be as dynamic and responsive as we want to be.

What will be different

We’re changing the way we consult and engage with you on regulatory changes. To meet our statutory duties under the Health and Social Care Act 2008 we will still publish a statement that explains how we’ll assess the performance of health and care service providers.

However, going forward, we’ll be able to hear people’s views continuously and in a range of ways, making it easier for us to design solutions together with all our stakeholders in real time as we develop our future ways of regulating.

This means you’ll see fewer large-scale formal consultations, but more ongoing opportunities to contribute as we’ll engage in different ways. Where we do need to consult on areas under section 46 of the Act, our consultations will be more targeted and responsive. So, rather than consultations that ask about many diverse areas of our work, we’ll have more targeted conversations about things that affect you, for example through focus groups and our online CitizenLab platform. This will help us to respond more quickly to changes in health and care.

Importantly, it will mean we’ll spend less time planning for formal consultations and more time listening to you.

In future, the guidance we provide about how we regulate will be more focused on the key areas that service providers and the public need to know about. Where we make changes to the way we carry out our work, we’ll tell you about them as soon as we can and explain them clearly. Our engagement activity and the information we publish will be more accessible and easier to understand.

Our promise to keep you informed and involved

- We’ll always meet our statutory duties to consult under the Health and Social Care Act 2008.

- We’ll engage with you in a proportionate, targeted and responsive way when we’re making changes to the information and guidance we publish about how we assess quality.

- Our information will be up to date and the latest version will always be available on our website in an accessible and easy to understand format.

How to respond to this consultation

Thank you for taking the time to tell us what you think about our proposals for more flexible and responsive regulation. It’s important for us to get your views so we can work together to develop our methods.

Please respond by 5pm on Tuesday 23 March 2021.

The quickest and easiest way to respond is through our online form.

If you can’t use the online form, you can respond by email to: regulatorychanges@cqc.org.uk

Or you can post your response free of charge to:

Freepost RSLS-ABTH-EUET

Regulatory Changes Consultation

Care Quality Commission

Citygate

Gallowgate

NEWCASTLE UPON TYNE

NE1 4WH

Our consultation

Consultation on changes for flexible regulation

Downloads

Consultation on changes for more flexible and responsive regulation - consultation document

Please tell us what you think about our changes to how we check services - easy read
